
In context: Artificial general intelligence (AGI) refers to AI capable of expressing human-like or even super-human reasoning abilities. Also known as "strong AI," AGI would sweep away any "weak" AI currently available on the market and usher in a new era of human history.

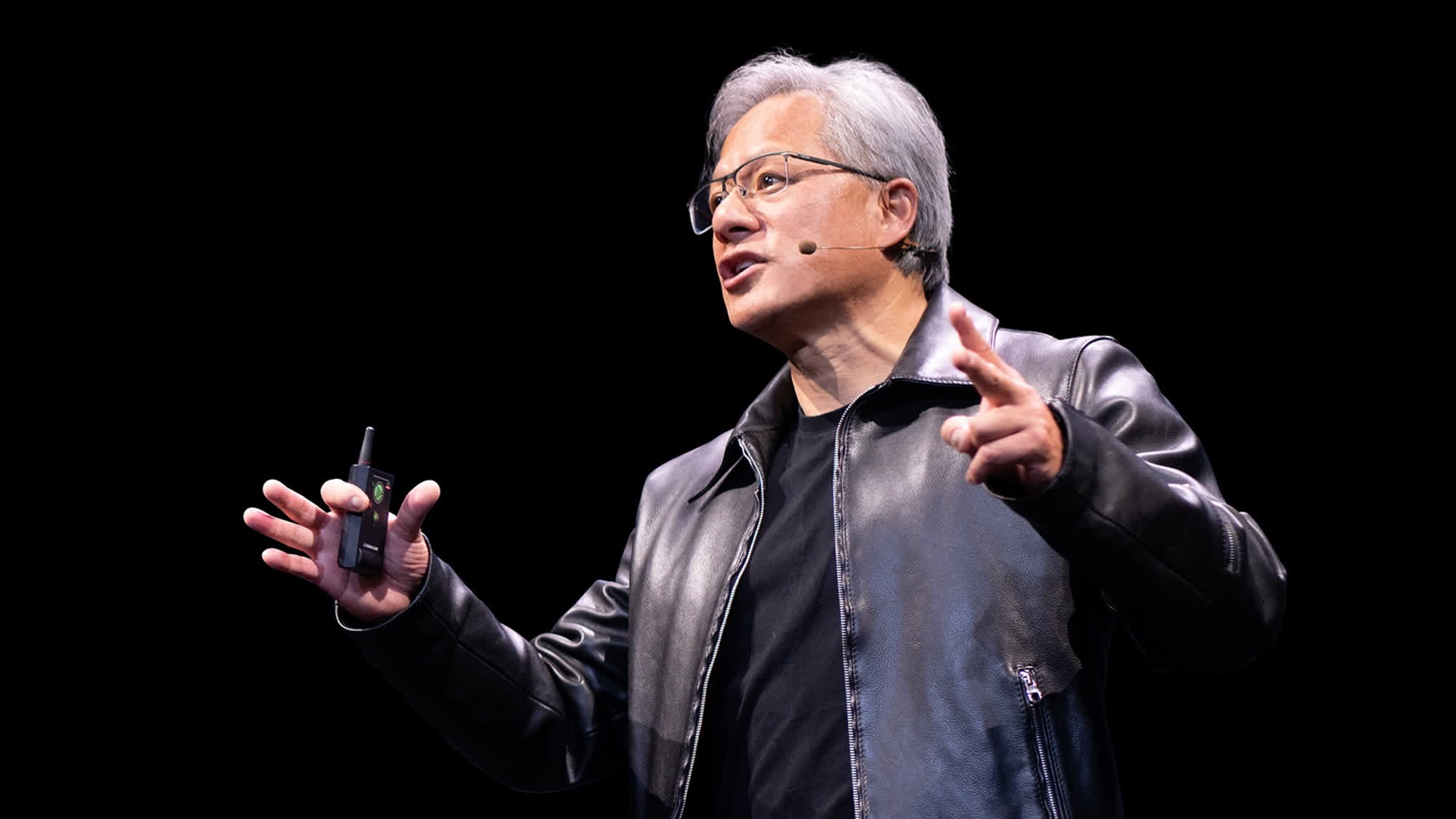

During this year's GPU Technology Conference, Jensen Huang talked about the future of artificial intelligence technology. Nvidia designs the majority of GPUs and AI accelerator chips in use today, and people often ask the company's CEO about AI evolution and future prospects.

Besides introducing the Blackwell GPU architecture and the new B200 and GB200 "superchips" for AI applications, Huang discussed AGI with the press. "True" artificial intelligence has been a topic of modern science fiction for decades. Many think the singularity will come sooner rather than later now that lesser AI services are so cheap and accessible to the public.

Huang believes that some form of AGI will arrive within five years. However, science has yet to define artificial general intelligence precisely. Huang insisted that we agree on a specific definition for AGI, with standardized tests designed to demonstrate and quantify a software program's "intelligence."

If an AI algorithm can complete tasks "8 percent better than most people," we could proclaim it a definite AGI contender. Huang suggested that AGI tests could involve legal bar exams, logic puzzles, economic tests, or even pre-med exams.

The Nvidia boss stopped short of predicting when, or if, a human-like reasoning algorithm could arrive, even though members of the press continually ask him that very question. Huang also shared thoughts on AI "hallucinations," a major issue of modern ML algorithms where chatbots convincingly answer queries with baseless, hot (digital) air.

Huang believes that hallucinations are easily avoidable by forcing the AI to do its due diligence on every answer it provides. Developers should add new rules to their chatbots, implementing "retrieval-augmented generation." This process requires the AI to compare the facts discovered in the source with established truths. If the answer turns out to be misleading or non-existent, it should be discarded and replaced with the next one.
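
The checking step described above can be pictured with a small Python sketch. It is only an illustration under loud assumptions: the `ESTABLISHED_FACTS` table, the candidate format, and both function names are hypothetical, and exact string matching stands in for whatever comparison a real system would use. It does not show how Nvidia or any production chatbot implements retrieval-augmented generation, which typically retrieves documents and feeds them to the model rather than filtering finished answers.

```python
from typing import Dict, List, Optional

# Hypothetical store of "established truths" (an assumption for illustration).
# A real system would query a curated knowledge base or search index instead.
ESTABLISHED_FACTS: Dict[str, str] = {
    "blackwell": "Blackwell is an Nvidia GPU architecture.",
    "gb200": "GB200 is an Nvidia superchip for AI applications.",
}


def is_supported(candidate: Dict[str, str]) -> bool:
    """Return True when the candidate's claim matches the stored fact for its topic."""
    fact = ESTABLISHED_FACTS.get(candidate["topic"])
    # A missing fact (non-existent) or a mismatch (misleading) both fail the check.
    return fact is not None and candidate["claim"] == fact


def first_supported_answer(candidates: List[Dict[str, str]]) -> Optional[str]:
    """Walk the candidates in order, discarding each one that fails the check."""
    for candidate in candidates:
        if is_supported(candidate):
            return candidate["claim"]
    # Nothing survived the check, so decline to answer rather than guess.
    return None


if __name__ == "__main__":
    answers = [
        {"topic": "blackwell", "claim": "Blackwell is an Intel CPU."},
        {"topic": "blackwell", "claim": "Blackwell is an Nvidia GPU architecture."},
    ]
    print(first_supported_answer(answers))
```

Returning `None` when no candidate survives reflects one possible design choice: a chatbot that declines to answer instead of offering an unverified claim.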
